How to run Unifi controller in AWS with a small (1 GB) memory footprint

Posted on Wed 08 September 2021 in tech

For years, I have been hosting the Unifi controller in AWS. AWS is both more convenient and more reliable than hosting a local server. I am also convinced that hosting a minimalist server costs about the same on AWS as running one locally. In the past, I have purchased a one-year reservation to keep my server costs as low as possible. In AWS, that generally results in at least a 40% discount over on-demand prices.

However, this year I invested in a 3-year reservation in hopes of trimming the cost even more. Ironically and unfortunately, at the beginning of 2021 Unifi released a major upgrade to the controller software which, in my experience, dramatically and needlessly increased the memory requirements of my controller. As a result, my reserved instance, an AWS t3.micro with 1 GB of RAM and 2 vCPUs, has run out of memory nearly every other day in 2021.

I worked for months to debug the issue, but until now I had failed to resolve all of the technical challenges. I have found several steps that get Unifi memory utilization pared down and under control.

How to free up memory for Unifi controller running on an Ubuntu instance in AWS

Limit the memory used by the Unifi Java application to 264 MB

This is achieved by setting the properties unifi.xms and unifi.xmx in /var/lib/unifi/system.properties. I determined the amount, 264 MB, experimentally by trial and error. It is the most memory I have found the controller needs to run stably in my setup.

Mine looks like this:

unifi.xms=125

unifi.xmx=264

I have found that my Unifi controller requires only a very small amount of memory for my network, a home network with fewer than 5 devices. If your installation requires more memory, you can raise xmx to the amount required. Don't give it more memory than it needs in the vain hope that it will perform better.
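If you would rather script the two heap settings than edit the file by hand, something along these lines may help. It is a minimal sketch, not part of the Unifi package: `set_prop` is a helper name made up for illustration, and the demo writes to a scratch file instead of the real /var/lib/unifi/system.properties so it can be tried safely.

```shell
#!/bin/sh
# set_prop FILE KEY VALUE: replace an existing KEY=... line or append one.
# (set_prop is an illustrative helper, not something Unifi ships.)
set_prop() {
  file=$1 key=$2 value=$3
  if grep -q "^${key}=" "$file"; then
    # Rewrite the existing line in place, keeping a .bak copy.
    sed -i.bak "s|^${key}=.*|${key}=${value}|" "$file"
  else
    printf '%s=%s\n' "$key" "$value" >> "$file"
  fi
}

# Demo on a scratch file that starts with a larger heap setting.
props=$(mktemp)
printf 'unifi.xmx=1024\n' > "$props"

set_prop "$props" unifi.xms 125
set_prop "$props" unifi.xmx 264
cat "$props"
```

To apply it for real, point `props` at /var/lib/unifi/system.properties, run it with sudo, and restart the controller afterwards (the service is assumed here to be named unifi).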

Limit the amount of memory used by the WiredTiger cache in MongoDB

This is also a setting in /var/lib/unifi/system.properties

unifi.db.extraargs=--wiredTigerCacheSizeGB\=0.125

This limits the MongoDB cache to about 128 MB (0.125 GB). I have not noticed any degradation in performance with this change. I suspect a large dedicated Mongo cache is not a requirement for Unifi.
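Because the flag is expressed in GB, it is easy to slip when converting from a budget in MB. The sketch below does the arithmetic and prints the property line; `mb_to_cache_gb` is a made-up helper name, and it assumes 1 GB = 1024 MB.

```shell
#!/bin/sh
# mb_to_cache_gb MB: convert a cache budget in MB (1 GB = 1024 MB here)
# into the fractional GB value passed to --wiredTigerCacheSizeGB.
# (mb_to_cache_gb is an illustrative helper name.)
mb_to_cache_gb() {
  awk -v mb="$1" 'BEGIN { printf "%.3f\n", mb / 1024 }'
}

cache_gb=$(mb_to_cache_gb 128)

# The "=" inside the value is escaped with a backslash, as in the line above.
printf 'unifi.db.extraargs=--wiredTigerCacheSizeGB\\=%s\n' "$cache_gb"
```

With 128 as the input this prints the same line used above, with a value of 0.125.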

Prevent an 'extra' mongodb from starting up on system boot

That's right, there is a duplicate mongodb started by default. Unifi requires the mongodb package; however, it does not use the 'system' mongodb, it starts its own instance. The mongodb that systemctl starts under the default configuration is therefore a redundant waste of resources.

$ sudo systemctl disable mongodb

$ sudo systemctl stop mongodb
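A quick way to confirm the duplicate is gone is to count the mongod processes that are left. This is a rough sketch: `count_mongod` is a made-up helper name, and it assumes the database process shows up under the command name mongod.

```shell
#!/bin/sh
# count_mongod: count mongod entries in `ps -eo comm=` style input
# (one command name per line on stdin). Illustrative helper name.
count_mongod() {
  grep -c -x 'mongod' || true
}

# Live check: after the two systemctl commands, and with the controller
# running, this would be expected to print 1 (Unifi's own instance).
ps -eo comm= | count_mongod
```

If it still prints 2, the system mongodb service is probably still running.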

Reduce the amount of memory allowed for Unifi Launcher

By default, the Unifi launcher is configured to allow 1 GB of RAM. That is ridiculous. It runs comfortably on a quarter of that amount.

Launcher memory is configured in /etc/init.d/unifi

Find this line:

JVM_MAX_HEAP_SIZE=1024M

I changed it to 256M:

JVM_MAX_HEAP_SIZE=256M

I also set the initial heap size to 125M and use the Garbage-First (G1) collector. Both are completely optional.

JVM_INIT_HEAP_SIZE=125M

UNIFI_JVM_EXTRA_OPTS=-XX:+UseG1GC
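After restarting the controller, it can be worth checking that the new limits actually reached the JVM. The sketch below pulls the heap and collector flags out of the running Java command lines. It assumes the settings end up as standard `-Xms`/`-Xmx` and `-XX:+Use...GC` flags on the java command line, and `heap_flags` is a made-up helper name.

```shell
#!/bin/sh
# heap_flags: read process command lines on stdin and print any
# -Xms/-Xmx flags and -XX:+Use...GC flags, one per line.
# (heap_flags is an illustrative helper name.)
heap_flags() {
  tr ' ' '\n' | grep -E '^(-Xm[sx]|-XX:\+Use[A-Za-z0-9]*GC$)' || true
}

# Live check against running Java processes (prints nothing if none run).
ps -eo args= | grep '[j]ava' | heap_flags
```

A package upgrade may replace /etc/init.d/unifi, so it is worth re-running this check after upgrading the controller.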

Optional steps to free up additional memory

I manage my own software upgrades. This may not be a best practice for every installation; however, in my case I prefer not to be surprised by changes such as controller upgrades that radically increase requirements or Mongo changes that suddenly reserve hundreds of megabytes of unused memory. Because I manage my own upgrades, I can disable the update daemons and save about 60 MB of memory.

First, disable snapd:

$ sudo systemctl disable snapd

$ sudo systemctl stop snapd

Next, disable unattended-upgrades:

$ sudo systemctl disable unattended-upgrades

$ sudo systemctl stop unattended-upgrades
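The disable and stop pairs above can be collapsed with systemctl's `--now` option, which stops a unit at the same time as disabling it. Here is a small sketch that only prints the commands by default. `stop_and_disable` and the `RUN` variable are names invented for this example.

```shell
#!/bin/sh
# stop_and_disable UNIT...: print (or run) one systemctl command per unit.
# With RUN unset the commands are only echoed, so this is safe to try;
# set RUN=sudo to actually execute them. Illustrative helper name.
stop_and_disable() {
  for unit in "$@"; do
    ${RUN:-echo} systemctl disable --now "$unit"
  done
}

stop_and_disable snapd unattended-upgrades
```

On a default Ubuntu install, snapd may also be started on demand through snapd.socket, so that unit might need the same treatment. Treat that as an assumption and check it with systemctl status.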

Conclusion

This massively stripped-down system works great for a home Unifi controller running on an AWS t3.micro. At startup it uses only roughly half of the available 1 GB of RAM.

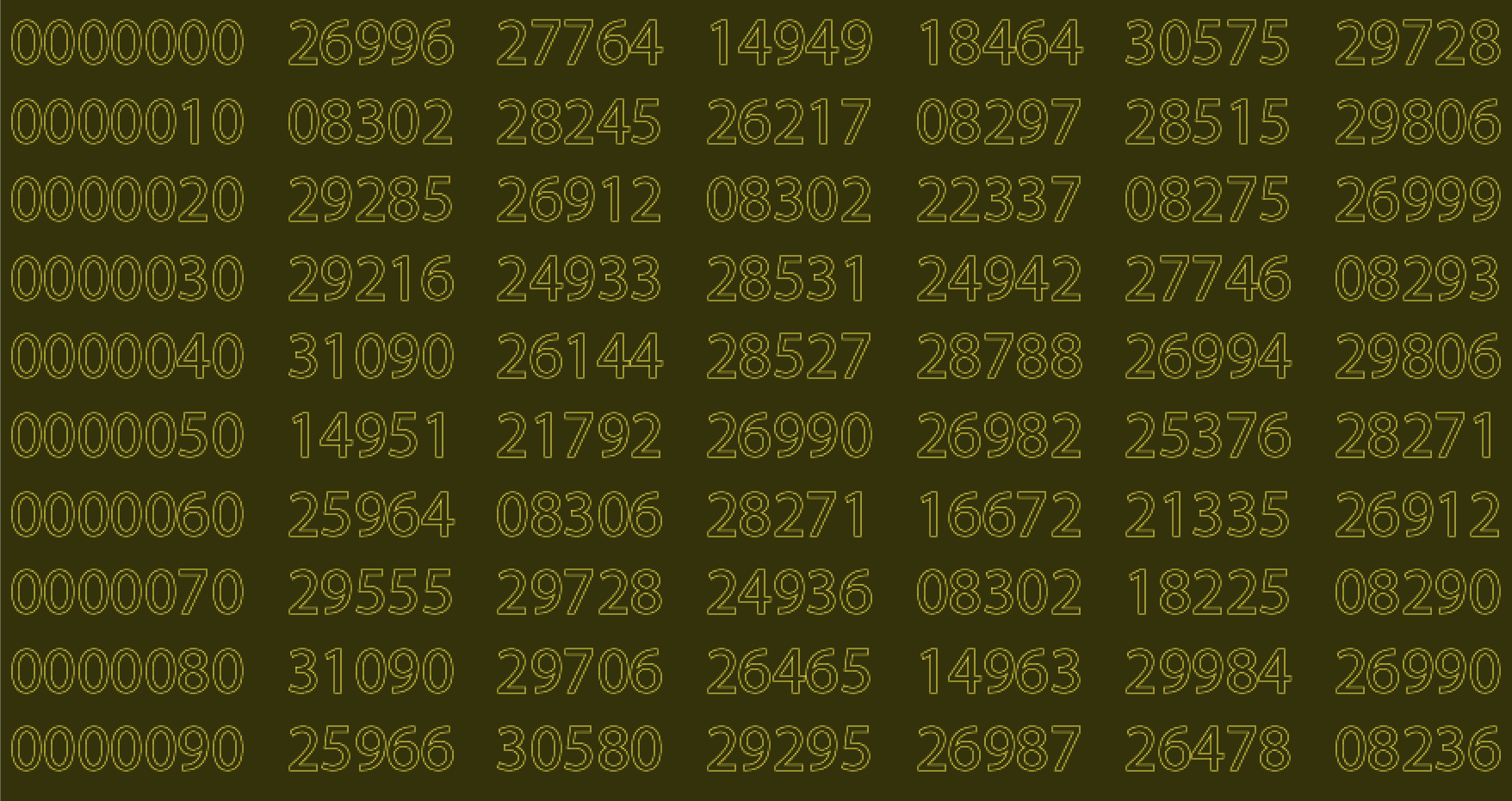

$ free -m
              total        used        free      shared  buff/cache   available
Mem:            953         536          85           0         331         284
Swap:             0           0           0
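To put a number on "roughly half", the used and total columns of the Mem: row can be turned into a percentage. This is a minimal sketch, with `mem_used_pct` as a made-up helper name. It is fed the figures from the output above.

```shell
#!/bin/sh
# mem_used_pct: read `free -m` output on stdin and print used/total
# for the Mem: row as a whole-number percentage (truncated).
# (mem_used_pct is an illustrative helper name.)
mem_used_pct() {
  awk '/^Mem:/ { printf "%d\n", $3 * 100 / $2 }'
}

# Figures from the output above: 536 MB used of 953 MB total.
printf 'Mem: 953 536 85 0 331 284\n' | mem_used_pct   # prints 56
```

On the server itself, `free -m | mem_used_pct` gives the live figure.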

Success! Unifi and Mongo have been reined in and work well in a 1 GB instance. My long-term reservation costs less than $3.00 per month. I believe I am saving about half versus hosting my own server while having the added convenience and reliability of AWS.